Purpose of this guide

The Production AI Playbook introduces the patterns and capabilities teams use to build production AI systems with n8n. It reflects lessons learned from teams integrating AI into real operational systems, where reliability, governance, and maintainability matter as much as model capability.

The sections that follow explore how to combine deterministic automation with AI, design scalable agent architectures, maintain human oversight, monitor performance, and operate AI workflows reliably in production.

Find out when new topics are added via RSS, LinkedIn or X.

n8n’s workflow architecture

n8n is a node-based workflow automation platform where composable nodes chain together into execution pipelines. Workflows orchestrate data movement, system integrations, business logic, and AI steps in one place. This architecture makes it easier to visualize, explain, and control how automation systems operate.

n8n is source-available under a fair-code license: you can inspect the codebase and self-host on your own infrastructure, or use n8n's managed cloud instead. Teams that need full control over data residency and deployment can run n8n within their own environment.

AI as part of deterministic workflows

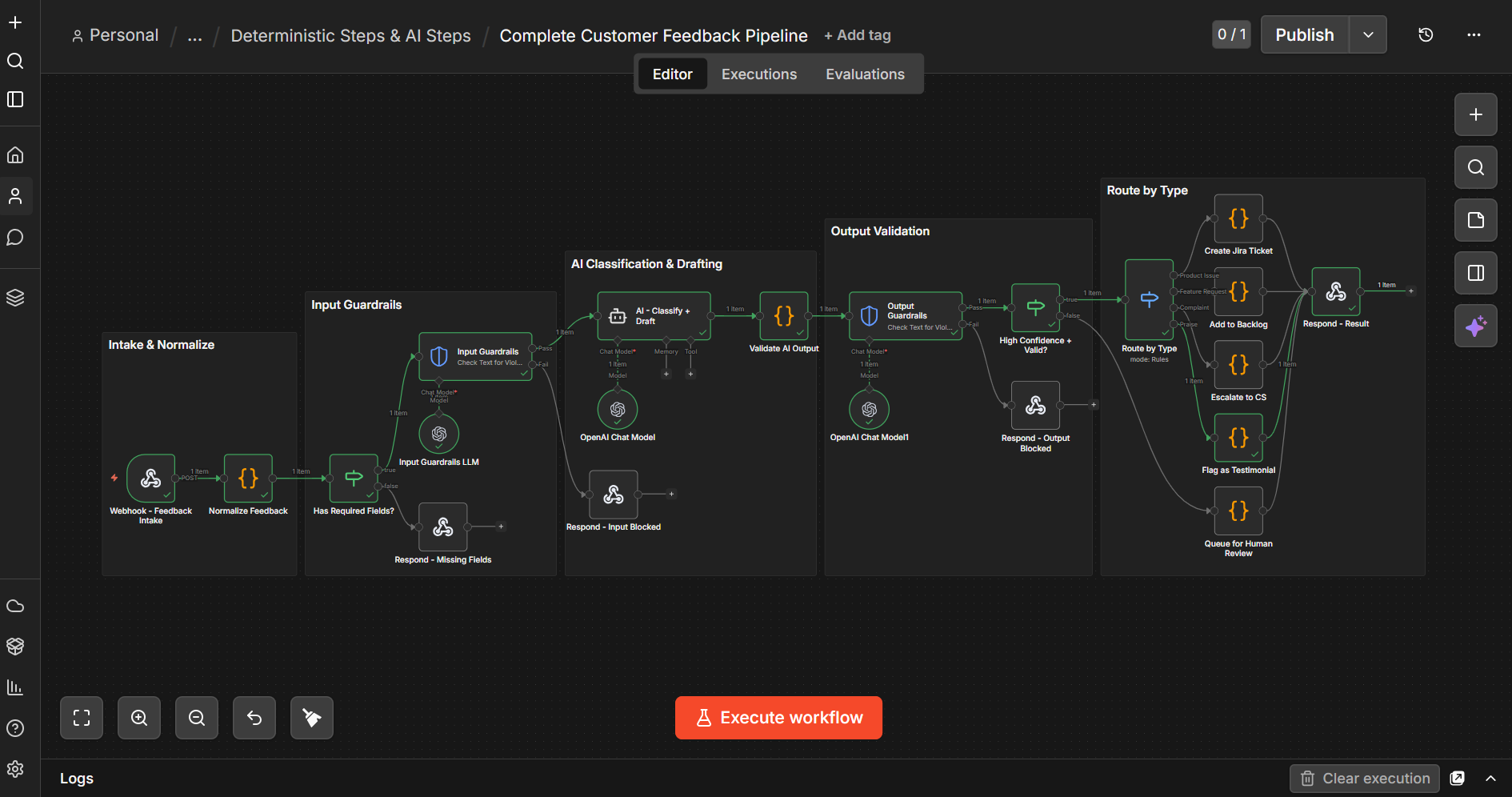

In n8n, AI operates alongside deterministic automation rather than replacing it.

Workflows can prepare data before AI steps run and apply validation, routing, or logic afterward. This allows teams to structure how AI is used inside a system and control how outputs affect downstream actions.

Common patterns include:

- preparing and structuring inputs before sending them to a model

- validating or formatting outputs before passing them to other systems

- routing results based on conditions or AI responses

- combining AI-driven decisions with rule-based logic

This structure helps keep AI outputs grounded in clean inputs and predictable system behavior, while allowing teams to apply AI selectively so models run only where they add value.
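The patterns above can be sketched in plain Python. This is an illustrative sketch only, not n8n code: in n8n each function would correspond to a node on the canvas. The ticket fields, the label set, the queue names, and the `call_model` parameter are all hypothetical stand-ins chosen for the example.

```python
import json
from typing import Callable

# Hypothetical label set the downstream systems know how to handle.
ALLOWED_LABELS = {"billing", "technical", "other"}

def prepare_input(raw_ticket: dict) -> str:
    """Deterministic step: clean and structure the input before the model sees it."""
    subject = str(raw_ticket.get("subject", "")).strip()
    # Collapse whitespace and cap the length so the prompt stays predictable.
    body = " ".join(str(raw_ticket.get("body", "")).split())[:2000]
    return f"Subject: {subject}\nBody: {body}"

def validate_output(model_text: str) -> dict:
    """Deterministic step: accept only well-formed JSON with an allowed label."""
    try:
        data = json.loads(model_text)
    except json.JSONDecodeError:
        return {"valid": False, "label": None}
    label = data.get("label") if isinstance(data, dict) else None
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        return {"valid": False, "label": None}
    return {"valid": True, "label": label}

def route(result: dict) -> str:
    """Rule-based routing: invalid AI output goes to a person, not to a system."""
    if not result["valid"]:
        return "human_review"
    queues = {"billing": "billing_queue", "technical": "support_queue"}
    return queues.get(result["label"], "general_queue")

def run_pipeline(raw_ticket: dict, call_model: Callable[[str], str]) -> str:
    """Prepare -> AI step -> validate -> route."""
    prompt = prepare_input(raw_ticket)
    model_text = call_model(prompt)  # the only non-deterministic step
    return route(validate_output(model_text))

def fake_model(prompt: str) -> str:
    """Stub standing in for a real model call, so the sketch runs offline."""
    return '{"label": "billing"}'
```

Calling `run_pipeline({"subject": "Refund", "body": "Charged twice"}, fake_model)` sends the ticket to the billing queue, while a model that returns malformed text ends up in human review. The model call is isolated to one line, so everything before and after it stays testable without a model.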

Agents and tools within workflows

Agents in n8n operate as components within workflows rather than standalone systems. The AI Agent node connects a model, memory, and tools, but its effectiveness comes from the workflow around it. Workflows control what information the agent receives, what tools it can use, and how its responses are handled.

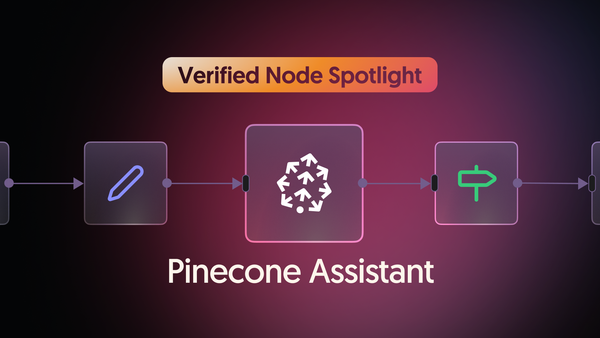

Because production AI systems rarely operate in isolation, agents can connect to the systems you already use: APIs, databases, internal services, and external tools. n8n's ecosystem includes over 500 built-in nodes alongside 500+ vendor-maintained integrations covering services like Salesforce, Jira, Slack and Postgres. This allows teams to expand agent capabilities over time by adding nodes rather than redesigning their architecture.
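To make the idea of the workflow controlling an agent's tools concrete, here is a minimal Python sketch. It does not use n8n's AI Agent node or any n8n API. The tool functions, the registry, and the shape of `tool_request` are hypothetical, and the model's tool choice is passed in as a plain dictionary rather than produced by a real model.

```python
from typing import Callable, Dict, List

Tool = Callable[[str], str]

def lookup_order(order_id: str) -> str:
    """Hypothetical read-only tool."""
    return f"Order {order_id}: shipped"

def refund_order(order_id: str) -> str:
    """Hypothetical tool with side effects."""
    return f"Order {order_id}: refunded"

# Adding a capability means registering one more entry here,
# not redesigning the code that runs the agent.
TOOL_REGISTRY: Dict[str, Tool] = {
    "lookup_order": lookup_order,
    "refund_order": refund_order,
}

def run_agent_step(tool_request: dict, allowed_tools: List[str]) -> dict:
    """Execute one tool call proposed by a model, only if this workflow allows it."""
    name = tool_request.get("tool")
    if name not in allowed_tools or name not in TOOL_REGISTRY:
        return {
            "status": "rejected",
            "reason": f"tool {name!r} is not enabled for this workflow",
        }
    output = TOOL_REGISTRY[name](str(tool_request.get("argument", "")))
    return {"status": "ok", "output": output}
```

A support workflow might pass `allowed_tools=["lookup_order"]`, so a proposed `refund_order` call is rejected and can be handled by a separate branch, for example one that asks a person to approve it.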

Security and credentials

n8n stores credentials encrypted and injects them at execution time, so secrets are never exposed in workflow definitions. For teams with strict compliance requirements, self-hosting gives you full control over where data is processed and stored. Role-based access controls let you manage who can view, edit, or execute workflows across your organization.
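The separation between a workflow definition and its secrets can be illustrated with a short Python sketch. This is not n8n's implementation: the store, the `credential_ref` field, and the `resolve_credentials` function are hypothetical, and the store is a plain dictionary where a real system would keep encrypted values.

```python
import copy

# Hypothetical stand-in for an encrypted credential store.
CREDENTIAL_STORE = {
    "crm_api": {"api_key": "secret-value-123"},
}

# The definition that gets saved, exported, or shared holds a reference only.
WORKFLOW_DEFINITION = {
    "name": "Sync contacts",
    "nodes": [
        {"name": "Fetch contacts", "type": "http_request", "credential_ref": "crm_api"},
        {"name": "Format rows", "type": "transform"},
    ],
}

def resolve_credentials(definition: dict, store: dict) -> dict:
    """Build a runtime copy with secrets attached; the stored definition is untouched."""
    runtime = copy.deepcopy(definition)
    for node in runtime["nodes"]:
        ref = node.get("credential_ref")
        if ref is not None:
            # Raises KeyError if the reference is unknown, which fails the run early.
            node["credentials"] = store[ref]
    return runtime
```

Because the secret is attached only to the runtime copy, exporting or reviewing `WORKFLOW_DEFINITION` never reveals the key, and rotating a credential means updating the store rather than editing every workflow.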

Core concepts in n8n

The following concepts appear throughout this guide and form the foundation of how workflows operate.

| Concept | Description |
|---|---|
| Nodes | Individual steps in a workflow that perform a specific action, such as calling an API, transforming data, or running an AI task. Think of them like functions in a pipeline with defined inputs and outputs. |
| Workflows | Execution pipelines that chain nodes together and define how automation runs. |
| Sub-workflows | Reusable workflows that can be called from other workflows. They allow complex automation or agent logic to be broken into smaller, testable components. |
| Items (execution data) | Structured data objects that flow through workflows. Nodes process items and output new ones, allowing workflows to handle single events or collections of data. |
| Expressions & variables | Dynamically reference data from earlier steps to populate parameters, prompts, or configuration values. |
| Conditional routing | Branching logic using nodes like IF or Switch to route data based on conditions or AI outputs. |
| Loops and iteration | Process collections of items or repeat operations across multiple records. |
| Triggers & webhooks | Start workflows from external events, HTTP requests, or scheduled triggers. |
| Credentials | Securely stored authentication for APIs and services, encrypted at rest and injected at execution time. |
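As a rough mental model of how several of these concepts fit together, the Python sketch below treats nodes as functions over a list of items and an IF-style node as a function that splits items into two branches. It is a simplified illustration, not n8n's runtime. Each payload sits under a `json` key to mirror the item shape n8n uses; the `amount` and `priority` fields and the branch names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Item = Dict[str, dict]  # each item wraps its payload under a "json" key

def set_priority(items: List[Item]) -> List[Item]:
    """Node: reads each item and outputs a new item with a derived field."""
    output = []
    for item in items:
        payload = item["json"]
        priority = "high" if payload["amount"] >= 1000 else "normal"
        output.append({"json": {**payload, "priority": priority}})
    return output

def if_node(
    items: List[Item], condition: Callable[[Item], bool]
) -> Tuple[List[Item], List[Item]]:
    """Conditional routing: split items into a true branch and a false branch."""
    true_branch = [item for item in items if condition(item)]
    false_branch = [item for item in items if not condition(item)]
    return true_branch, false_branch

def run_workflow(items: List[Item]) -> Dict[str, List[Item]]:
    """Workflow: chain the nodes and return what each branch received."""
    enriched = set_priority(items)
    high, normal = if_node(enriched, lambda item: item["json"]["priority"] == "high")
    return {"escalate": high, "archive": normal}
```

Passing two items with amounts of 1500 and 20 sends the first to the `escalate` branch and the second to `archive`. Because `run_workflow` takes items in and returns items out, it could itself be called from a larger pipeline, which is the role sub-workflows play.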

What this playbook covers

Building an AI workflow that works once is different from running AI systems reliably in production. The rest of this guide explores the patterns and capabilities teams use to move from experimentation to production AI systems, including:

- combining deterministic automation with AI-driven steps

- designing modular multi-agent architectures

- extending agent capabilities with tools and integrations

- maintaining human oversight of critical AI decisions

- evaluating and monitoring AI performance

- tracing and auditing AI behavior

- deploying and scaling AI workflows in production

New sections are published on a rolling basis. You can follow along via RSS, LinkedIn or X as each topic is added.

Together, these sections show how to design AI systems that remain understandable, controllable, and adaptable as they grow. Throughout this guide, we focus on practical architecture patterns rather than specific tools or models, so the systems you build remain adaptable as models, providers, and capabilities continue to evolve.

This playbook was written by Elvis Saravia, co-founder of DAIR.AI and an AI researcher specializing in natural language processing and language models. We're thankful to have his expertise as a technical educator shaping this series, which combines real architecture patterns with hands-on templates that readers can start building with immediately.